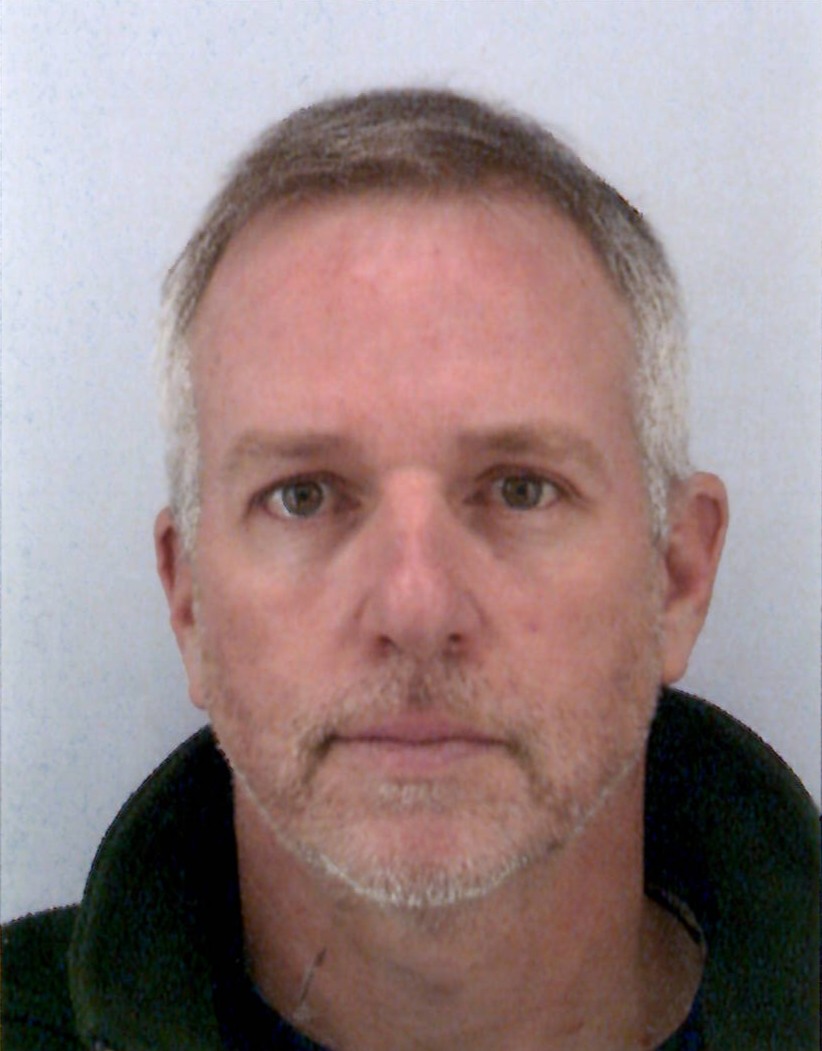

Brief Biography

- I am a CNRS Research Scientist (CRCN - HDR) and candidate for Research Director (DR2).

- Head of the Robot Vision team, focused on bridging the gap between rigorous geometric perception and modern generative learning.

- Based at the I3S Laboratory, Sophia Antipolis, France (CNRS / Université Côte d'Azur).

- My Google Scholar profile: Andrew I. Comport

Background and Position

- 2022 Habilitation à Diriger des Recherches (HDR), Université Côte d'Azur. Title: "Visual SLAM: the Direct Approach".

- 2009 - present CNRS Research Scientist at I3S. Head of the "Robot Vision" team since 2010. Member of the Laboratory Council (2022-present).

- 2015 - 2017 Co-founder and Chief Scientific Officer (CSO) of PIXMAP. Spin-off based on 10 years of CNRS research on dense mapping. Acquired by a major US tech company and deployed in global-scale spatial computing platforms.

- 2007 - 2009 CNRS Research Scientist (CR2) at LASMEA (Clermont-Ferrand), Rosace Team.

- 2005 - 2007 Postdoctoral Researcher (ICARE Project) at INRIA Sophia Antipolis (Urban autonomous navigation).

- 2001 - 2005 PhD in Computer Vision and Robotics at INRIA Rennes / IRISA (Lagadic Project). Advisors: F. Chaumette & E. Marchand.

Research Areas

My research focuses on Ego-centric Spatial AI, organized around three converging axes:

- Generative Spatial AI: Developing foundational models that fuse perception, language, and action. Recent work includes Generative Spherical World Models and Latent Inverse Graphics (NeRFs, Gaussian Splatting) for prediction and simulation.

- Direct Methods & Dense Perception: Formulating visual SLAM through photometric consistency and continuous optimization (Dense Direct Methods) to ensure robustness in dynamic, texture-poor environments.

- Embodied Action & Interaction: Validating spatial models on real-world platforms, including humanoid robots (Airbus), autonomous vehicles (Renault), and underwater multi-agent systems.

Selected Publications (Top 10)

Full publication list available at cv.hal.science/andrew-comport.

- Bringing NeRFs to the Latent Space: Inverse Graphics Autoencoder, A. Schnepf, K. Kassab, J.-Y. Franceschi, L. Caraffa, F. Vasile, J. Mary, A.I. Comport, V. Gouet-Brunet, International Conference on Learning Representations, 2025.

- HFGaussian: Learning Generalizable Gaussian Human with Integrated Human Features, A. Dey, C.-Y. Lu, A.I. Comport, S. Sridhar, C.-T. Lin, J. Martinet, IEEE Transactions on Artificial Intelligence, 2025.

- DiVa-360: The Dynamic Visual Dataset for Immersive Neural Fields, C.-Y. Lu, P. Zhou... A.I. Comport... S. Sridhar, IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024. (CVPR Highlight).

- MAM3SLAM: Towards underwater robust multi-agent visual SLAM, J. Drupt, A.I. Comport, C. Dune, V. Hugel, Ocean Engineering, 302, 117643, 2024.

- Instance-Aware Multi-Object Self-Supervision for Monocular Depth Prediction, H.E. Boulahbal, A. Voicila, A.I. Comport, IEEE Robotics and Automation Letters, 7(4), 2022.

- Humanoid Robots in Aircraft Manufacturing: The Airbus Use Cases, A. Kheddar, S. Caron, P. Gergondet, A.I. Comport, et al., IEEE Robotics & Automation Magazine, 27(4), 2020. (Best Paper Award).

- Point-to-hyperplane ICP: fusing different metric measurements for pose estimation, F.I. Ireta Muñoz, A.I. Comport, Advanced Robotics, 32(4), 2018. (Best Student Paper Finalist IROS'16).

- A unified rolling shutter and motion blur model for 3D visual registration, M. Meilland, T. Drummond, A.I. Comport, IEEE ICCV, 2013. (Resulted in 2 international patents acquired by Apple).

- On unifying key-frame and voxel-based dense visual SLAM at large scales, M. Meilland, A.I. Comport, IEEE/RSJ IROS, 2013. (Best Overall Paper Award).

- Real-time Quadrifocal Visual Odometry, A.I. Comport, E. Malis, P. Rives, The International Journal of Robotics Research, 29(2-3), 2010. (Foundational Reference).

Teaching Activities

Over 700 hours of equivalent course contact time, covering Master 1 and Master 2 levels.

- Visual SLAM (2021-Present): Erasmus Mundus Master (MIR - Marine Intelligent Robotics). Master 2 level.

- 3D Machine Vision (2020-Present): Polytech Nice Sophia (Electrical Engineering / EIT). Master 1 level.

- Machine Vision & Learning (2019-Present): University of Toulon (Robotics & Connected Objects). Master 2 Research level.

- Visual Servoing (2012-2014): University of Nice Sophia-Antipolis (Image & Multimedia VIM). Master 1 level.

- Visual Localisation and Mapping (2011-2014): University of Bourgogne (European MSc in Computer Vision). Master 2 level.

- Previous Courses (2004-2016): Visual Servoing & Digital Imaging (Univ. Rennes 1), RGB-D/Kinect (IUT Nice), and Visual Geometry (Univ. Clermont-Ferrand).